Mykel Kochenderfer and I are finishing up the second edition of Algorithms for Optimization with MIT Press. The first edition took about five years, and the second edition has *only* taken… another five years. You can read it here.

What started as a simple extension with three new chapters became much more extensive. We overhauled many existing chapters, added new sections to others, and generally touched up the entire book. It has earned the title of second edition.

Modern technical textbooks include alt text: short descriptions attached to images that screen readers can read aloud to visually impaired readers. MIT Press authors provide alt text for every graphic element by submitting a spreadsheet in addition to the manuscript. Having alt text definitions live in a different place than our LaTeX source immediately felt like a bad idea. We don’t want to rely on memory to keep the alt text document up to date every time we change a figure or introduce a new one.

I decided very early on that I wanted the alt text descriptions to live alongside each graphical element in the LaTeX source. I defined a macro, \alttext{...}, and use it in every graphical element:

```latex
\begin{marginfigure}
    \centering
    \begin{tikzpicture}[
        ->, >=stealth',
        level/.style={sibling distance = 1.5cm/#1, level distance = 1cm},
        mynode/.style={circle, minimum size=7mm, align=center},
        terminal/.style={mynode, draw=black, fill=none},
        nonterminal/.style={mynode, draw=pastelBlue, fill=pastelBlue},
    ]
    \node [terminal] {$+$}
        child{ node[terminal] {$x$} }
        child{ node[terminal] {$\ln$}
            child{ node[terminal] {$2$}
            }
        };
    \end{tikzpicture}
    \alttext{A directed acyclic graph with a root plus node, a left-side x node, and a right-side natural log node with a two-node child.}
    \caption{
        \label{fig:expr-ln}
        The expression $x + \ln 2$ represented as a tree.
    }
\end{marginfigure}
```

These annotations let us update the alt text any time the figure is changed. The macro evaluates into nothing (\newcommand{\alttext}[1]{}), so it doesn’t affect document compilation. Great!

Unfortunately, MIT Press still needed a spreadsheet containing the figure numbers, captions, and their corresponding alt text. I needed an automated way to export the alt text annotations from the LaTeX source.

The First Attempt: Per-Line Scanning

My initial approach was to write a Julia script that scanned each LaTeX source file line-by-line and used regex matching to identify graphical elements and the \alttext macros. This script served a few purposes. First, it could identify which graphical elements still lacked alt text entries. Second, it allowed us to automatically check that our alt text entries adhered to MIT Press standards, such as avoiding certain characters and staying below a length limit. Finally, it packaged them up and exported the data to the required spreadsheet.
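As a rough illustration of the second purpose, a check like this could flag entries that break the publisher's rules. The length limit and the forbidden characters below are placeholders I made up for the sketch; the actual MIT Press requirements are not listed in this post, and `validate_alttext` is a hypothetical name.

```julia
# Hypothetical limits: the real MIT Press rules are not stated in this post.
const MAX_ALTTEXT_LENGTH = 250                       # assumed length limit
const FORBIDDEN_CHARS = ['$', '\\', '{', '}', '"']   # assumed forbidden characters

"""Return a list of human-readable problems with an alt text entry (empty if none)."""
function validate_alttext(alttext::AbstractString)
    problems = String[]
    if isempty(strip(alttext))
        push!(problems, "missing alt text")
        return problems
    end
    if length(alttext) > MAX_ALTTEXT_LENGTH
        push!(problems, "too long: $(length(alttext)) > $(MAX_ALTTEXT_LENGTH) characters")
    end
    for c in FORBIDDEN_CHARS
        if c in alttext
            push!(problems, "contains forbidden character '$(c)'")
        end
    end
    return problems
end
```

Returning a list of problems rather than a single Bool makes it easy to print one report covering every figure in the book.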

The first challenge was figure identification. The script looked for environments like \begin{tikzpicture} and commands like \plot. Unfortunately, this was more nuanced than I had expected. tikzpicture environments can be nested, and in a few cases we define a new command containing a tikzpicture environment, only to invoke it later with \protect to inject it safely into a caption. We also have \begin{ignore} environments, and the script has to skip any graphical elements inside them.

After graphical elements were identified, I searched within them for an alt text entry. This was done with a simple lookahead search. Not ideal, but it worked fairly well.

```julia
function find_alttext(lines, line_index_lo::Int, line_index_hi::Int)::String
    for line in lines[line_index_lo:line_index_hi]
        m = match(r"\\alttext\{([^}]+)\}", line)
        if isa(m, RegexMatch)
            return strip(m[1])
        end
    end
    return ""
end
```

MIT Press wanted the figure numbers for each alt text entry. That is, if a figure has a caption with, e.g., “Figure 5.7”, we wanted 5.7 exported in the spreadsheet. LaTeX does all of the numbering at compile time, which is extremely convenient, but that makes it harder to infer from the source. The script had to keep track of figure numbers, incrementing them over time, but only for graphical elements that had such captions. The script would try to figure out whether a caption existed, but would only assign a new figure number if the graphical element was actually a figure or margin figure.
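The counting rule described above can be sketched roughly as follows. This is an illustration rather than the actual script: `GraphicalElement`, `NUMBERED_ENVS`, and `assign_figure_numbers` are hypothetical names, and the sketch assumes figures are numbered per chapter as "chapter.index".

```julia
# Hypothetical record for a graphical element found in the source.
struct GraphicalElement
    env::String        # enclosing environment, e.g. "figure", "marginfigure", "example"
    has_caption::Bool  # whether a \caption was found for it
end

# Assumption: only these environments produce a numbered "Figure c.n" caption.
const NUMBERED_ENVS = ("figure", "marginfigure")

"""Assign "chapter.index" labels in source order; unnumbered elements get ""."""
function assign_figure_numbers(chapter::Int, elements::Vector{GraphicalElement})
    labels = String[]
    n = 0
    for el in elements
        if el.has_caption && el.env in NUMBERED_ENVS
            n += 1                              # only captioned figures advance the counter
            push!(labels, "$(chapter).$(n)")
        else
            push!(labels, "")
        end
    end
    return labels
end
```

The rule itself is simple. The fragile part in a line-based script is deciding `env` and `has_caption` correctly for every element in the first place.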

This script was used for the first version of the spreadsheet that we sent to MIT Press. It worked really well for identifying figures that lacked alt text and for verifying the content of the entries. Unfortunately, it had some issues with figure number identification, and I ended up scanning through every figure in the book and updating the spreadsheet by hand – exactly what I had wanted to avoid.

A Better Way: Lexing and Parsing

LaTeX source code is, well, code. And code is fundamentally hierarchical. Thinking of source code as a list of lines has a lot of downsides. Instead, to extract the data accurately, the script needed to understand the structure of the document more like the LaTeX compiler does. I started over, writing a proper lexer and parser, thereby providing a simple Abstract Syntax Tree (AST).

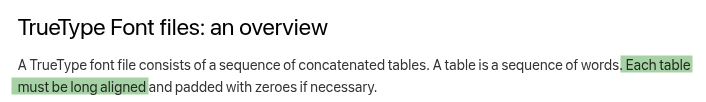

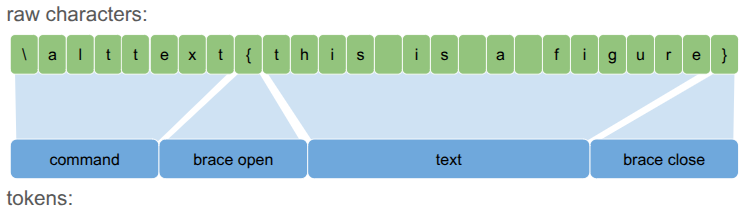

A lexer’s job is to convert the source code – a long byte array – into a sequence of manageable tokens. Reasoning about a character sequence like "\alttext{this is a figure}" is a lot harder than reasoning about <command><brace_open><text><brace_close>.
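To make that concrete, here is a minimal sketch of a lexer that splits a string into those four kinds of tokens. It is an illustration under simplifying assumptions, not the code used for the book: `SketchToken`, `lex`, and `token_text` are hypothetical names, command names are limited to ASCII letters, and `%` comments and other LaTeX subtleties are ignored.

```julia
# Hypothetical token record for this sketch; kinds are Symbols rather than an enum.
struct SketchToken
    kind::Symbol   # :text, :command, :brace_open, or :brace_close
    lo::Int        # index of the token's first byte
    hi::Int        # index of the token's last byte
end

is_ascii_letter(b::UInt8) = (UInt8('a') <= b <= UInt8('z')) || (UInt8('A') <= b <= UInt8('Z'))
is_special(b::UInt8) = b == UInt8('{') || b == UInt8('}') || b == UInt8('\\')

function lex(src::String)
    bytes = codeunits(src)
    n = length(bytes)
    tokens = SketchToken[]
    i = 1
    while i <= n
        b = bytes[i]
        if b == UInt8('{')
            push!(tokens, SketchToken(:brace_open, i, i))
            i += 1
        elseif b == UInt8('}')
            push!(tokens, SketchToken(:brace_close, i, i))
            i += 1
        elseif b == UInt8('\\')
            # A command is a backslash plus a run of letters, or plus one other byte (\{, \\).
            j = min(i + 1, n)
            while j < n && is_ascii_letter(bytes[j]) && is_ascii_letter(bytes[j + 1])
                j += 1
            end
            push!(tokens, SketchToken(:command, i, j))
            i = j + 1
        else
            # Plain text runs until the next brace or backslash.
            j = i
            while j < n && !is_special(bytes[j + 1])
                j += 1
            end
            push!(tokens, SketchToken(:text, i, j))
            i = j + 1
        end
    end
    return tokens
end

# Recover the source text that a token covers.
token_text(src::String, t::SketchToken) = String(codeunits(src)[t.lo:t.hi])
```

Calling `lex("\\alttext{this is a figure}")` yields a command, an opening brace, a text token, and a closing brace, each storing only a byte range into the original string.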

Each Token is effectively an enum that applies to a range of characters:

@enum TokenKind begin

TOKEN_TEXT # Raw text content

TOKEN_COMMAND # A LaTeX command starting with \, e.g. \alttext

TOKEN_BRACE_OPEN # An opening curly brace {

TOKEN_BRACE_CLOSE # A closing curly brace }

end

struct Token

kind::TokenKind

i_byte_lo::Int

i_byte_hi::Int

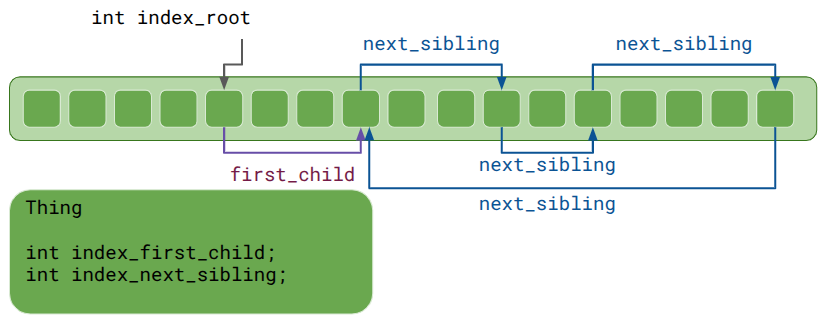

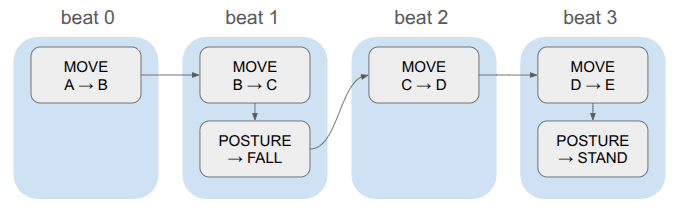

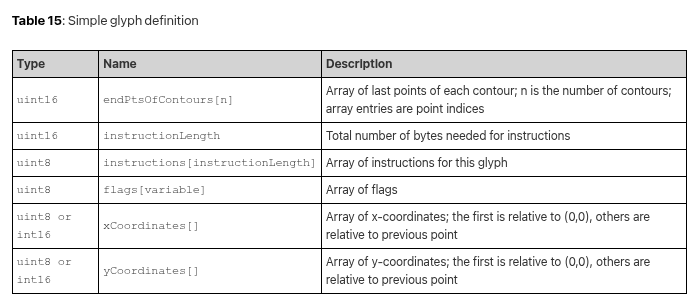

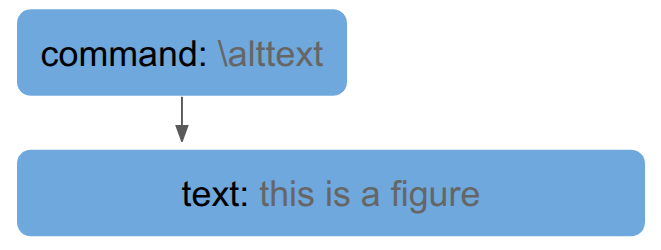

endThe next step, parsing, does the work of relating tokens to one another to form a tree. For example, in the running example, the text is an input into the \alttext command, so it becomes a child:

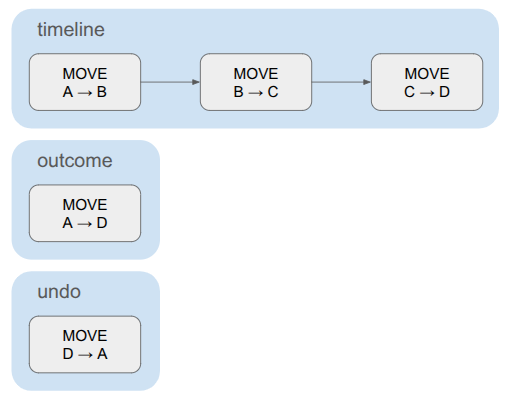

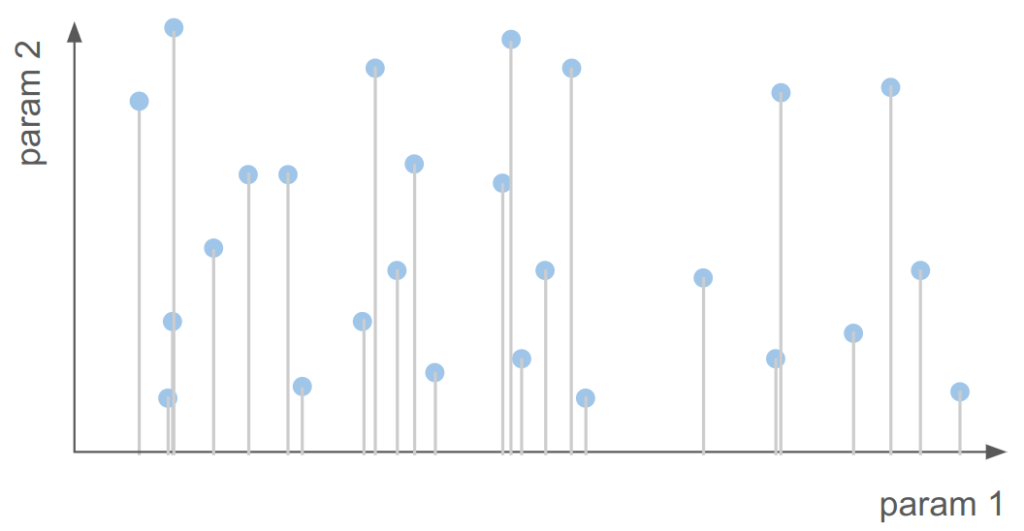

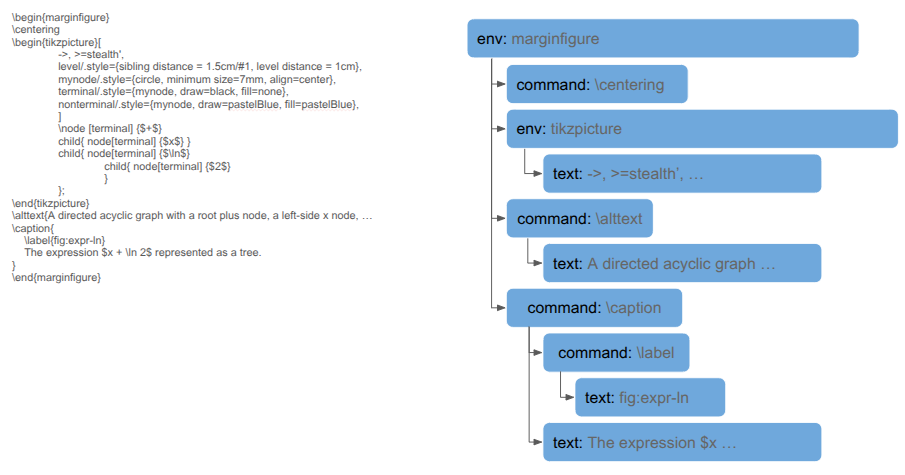

This tree lets us represent larger structures more efficiently. For example, the marginfigure code sample at the top ends up being represented as a tree:

This tree structure makes it much easier to tell whether a caption is defined for the margin figure, or whether there is an alt text command, or whether the marginfigure itself is inside an ignore environment.
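A compact recursive-descent sketch shows how such a tree might be built and then queried. It is deliberately simplified and is not the parser used for the book: it works directly on characters rather than tokens, assumes well-formed input, treats optional `[...]` arguments as plain text, and stores each brace argument as a group child of its command. `SketchNode`, `parse_into!`, and `parse_latex` are hypothetical names.

```julia
# Hypothetical, simplified node type for this sketch.
struct SketchNode
    kind::Symbol                  # :root, :text, :group, :command, or :env
    name::String                  # command/environment name, or the content of a :text node
    children::Vector{SketchNode}
end
SketchNode(kind, name) = SketchNode(kind, name, SketchNode[])

"""Parse from index `i` until whatever closes `parent`; return the next unread index."""
function parse_into!(parent::SketchNode, chars::Vector{Char}, i::Int)
    n = length(chars)
    while i <= n
        c = chars[i]
        if c == '}'                                  # closes the enclosing group
            return i + 1
        elseif c == '{'
            group = SketchNode(:group, "")
            i = parse_into!(group, chars, i + 1)
            push!(parent.children, group)
        elseif c == '\\'
            j = i + 1
            while j <= n && isletter(chars[j]); j += 1; end
            j == i + 1 && (j = min(j + 1, n + 1))    # single-character command such as \{
            name = String(chars[i+1:j-1])
            i = j
            if name == "begin" || name == "end"
                k = findnext(==('}'), chars, i)      # assumes {envname} follows directly
                envname = String(chars[i+1:k-1])
                i = k + 1
                name == "end" && return i            # closes the enclosing environment
                env = SketchNode(:env, envname)
                i = parse_into!(env, chars, i)
                push!(parent.children, env)
            else
                cmd = SketchNode(:command, name)
                while i <= n && chars[i] == '{'      # brace arguments become children
                    arg = SketchNode(:group, "")
                    i = parse_into!(arg, chars, i + 1)
                    push!(cmd.children, arg)
                end
                push!(parent.children, cmd)
            end
        else
            j = i
            while j < n && !(chars[j+1] in ('{', '}', '\\')); j += 1; end
            push!(parent.children, SketchNode(:text, String(chars[i:j])))
            i = j + 1
        end
    end
    return i
end

function parse_latex(src::AbstractString)
    root = SketchNode(:root, "")
    parse_into!(root, collect(src), 1)
    return root
end
```

With the tree in hand, asking whether a marginfigure has a caption reduces to checking its children, for example `any(c -> c.kind == :command && c.name == "caption", env.children)`.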

Nodes are more complicated than Tokens, but not by much:

@enum NodeKind begin

NODE_ROOT # The root node of the abstract syntax tree (AST)

NODE_TEXT # A text node

NODE_GROUP # A group of nodes enclosed in curly braces

NODE_COMMAND # A command node, optionally with arguments as children

NODE_ENV # An environment like \begin{...} ... \end{...}

end

struct Node

kind::NodeKind

i_byte_lo::Int # index into the original byte array of the first byte corresponding to this node

i_byte_hi::Int # index into the original byte array of the last byte corresponding to this node

i_token_lo::Int # index into the tokens array of the first token corresponding to this node

i_token_hi::Int # index into the tokens array of the last token corresponding to this node

i_name_lo::Int # index into the original byte array of the first byte of the command name

i_name_hi::Int # index into the original byte array of the last byte of the command name

i_parent::Int # parent node index, or zero otherwise

i_first_child::Int # first child node index, or zero otherwise

i_sibling_next::Int # first sibling node index - circular list

i_sibling_prev::Int # previous sibling node index - circular list

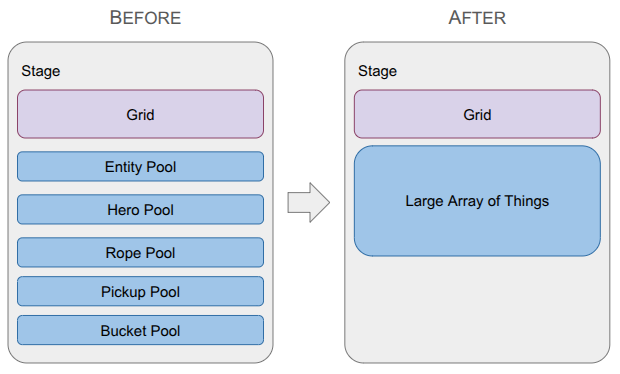

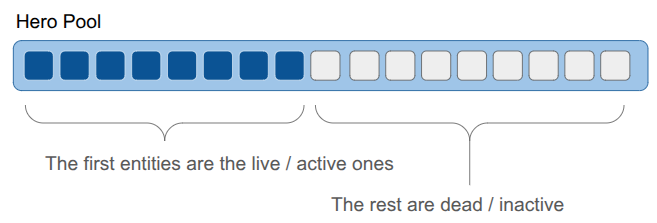

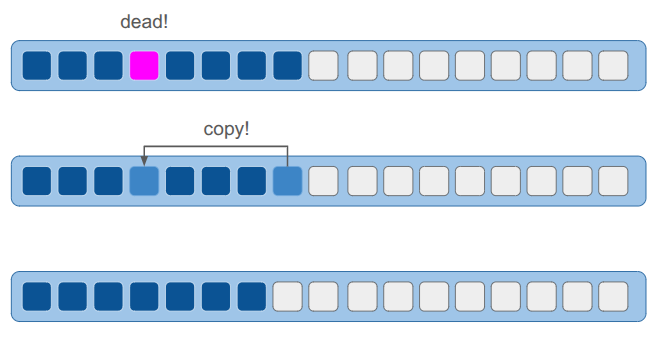

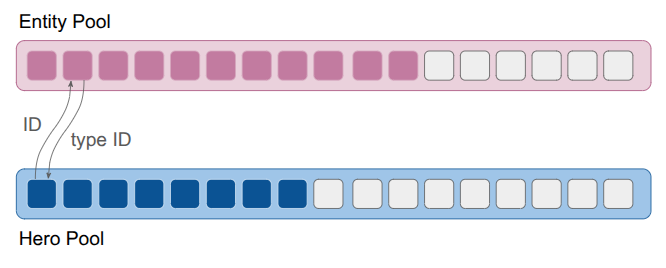

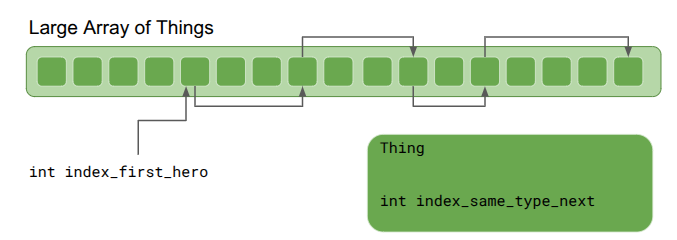

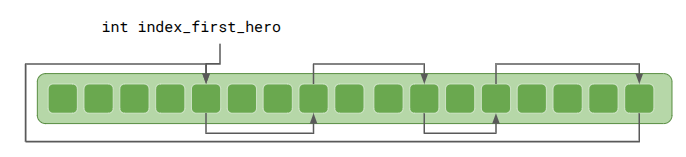

end(This struct definition is very similar to the Large Array of Things struct from last month.).

It supports five types of nodes. A true LaTeX compiler would of course do more, but we only need these. The root node is the root of the abstract syntax tree for the file being processed. Text nodes are basic text. Group nodes are formed from nodes enclosed by curly braces. Command nodes are commands like \alttext. Environment nodes represent a \begin{the_env}...\end{the_env} pair.
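The index-based links are what make tree queries cheap. Below is a minimal sketch of how nodes stored in one flat array might be linked and walked, using a reduced, hypothetical `LinkNode` record that keeps only a name and the four link fields. It is mutable here purely to keep the sketch short; `add_child!`, `child_indices`, and `has_ancestor` are illustrative names.

```julia
# Reduced, hypothetical version of the node record: a name plus the link fields.
mutable struct LinkNode
    name::String
    i_parent::Int        # parent node index, or zero
    i_first_child::Int   # first child node index, or zero
    i_sibling_next::Int  # next sibling (circular)
    i_sibling_prev::Int  # previous sibling (circular)
end

"""Append a node named `name` as the last child of `i_parent` (0 for none); return its index."""
function add_child!(nodes::Vector{LinkNode}, i_parent::Int, name::String)
    push!(nodes, LinkNode(name, i_parent, 0, 0, 0))
    i = length(nodes)
    nodes[i].i_sibling_next = i          # a lone node forms a sibling ring of one
    nodes[i].i_sibling_prev = i
    i_parent == 0 && return i
    i_first = nodes[i_parent].i_first_child
    if i_first == 0
        nodes[i_parent].i_first_child = i
    else
        i_last = nodes[i_first].i_sibling_prev   # circularity gives the last child in O(1)
        nodes[i_last].i_sibling_next = i
        nodes[i].i_sibling_prev = i_last
        nodes[i].i_sibling_next = i_first
        nodes[i_first].i_sibling_prev = i
    end
    return i
end

"""Collect the child indices of node `i` by walking the sibling ring once."""
function child_indices(nodes::Vector{LinkNode}, i::Int)
    out = Int[]
    i_first = nodes[i].i_first_child
    i_first == 0 && return out
    j = i_first
    while true
        push!(out, j)
        j = nodes[j].i_sibling_next
        j == i_first && break
    end
    return out
end

"""True if any ancestor of node `i` is named `name`, e.g. an ignore environment."""
function has_ancestor(nodes::Vector{LinkNode}, i::Int, name::String)
    j = nodes[i].i_parent
    while j != 0
        nodes[j].name == name && return true
        j = nodes[j].i_parent
    end
    return false
end
```

Checking whether a tikzpicture sits inside an ignore environment then becomes `has_ancestor(nodes, i_tikz, "ignore")`, and looking for a caption means scanning `child_indices` of the figure node.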

Using an AST made the alt text export logic much cleaner and more robust. This helped with the numbering issue, as it was easier to detect when a caption was defined, and whether a graphical element was inside an example or table.

We were able to detect when a graphical element was defined inside a \newcommand call, and added some basic tracking to find invocations of the defined command (typically after \protect). These deferred macros were really hard to handle with the previous system.

Conclusion

Yay! We built a mini-compiler to avoid filling out a spreadsheet. That might sound wasteful, but for a five-year project, spending a few days to ensure long-term maintainability is an easy decision.

What started as a simple string-matching script evolved into a LaTeX AST parser. Using the right data structure for the problem at hand made the exporting task a lot simpler. This process overall gives us the best of both worlds – our alt text lives next to the LaTeX for the graphical elements and it can be automatically exported into the spreadsheet that our publisher needs.